Clearly I watch too many robot movies. Terminator, I, Robot… you name it. But some of what these guys are saying makes sense.

“I say robot, you say no-bot!”

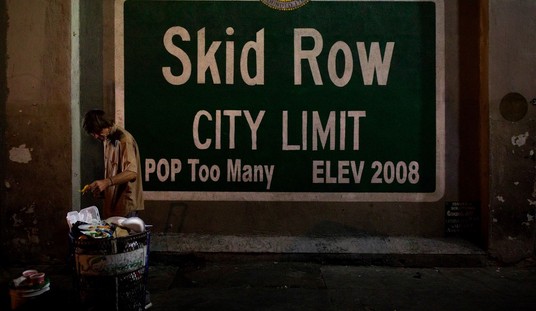

The chant reverberated through the air near the entrance to the SXSW tech and entertainment festival here.

About two dozen protesters, led by a computer engineer, echoed that sentiment in their movement against artificial intelligence.


“This is about morality in computing,” said Adam Mason, 23, who organized the protest.

Signs at the scene reflected the mood. “Stop the Robots.” “Humans are the future.”

We’ve had a lot of fun with this topic at times, particularly on weekends when the news cycle was rather slow, but I’d like to find out a bit more about how our readers feel when the subject is peeled back to the raw flesh underneath. Is this an issue for any of you?

We’ve seen concerns raised by people of consequence, including Stephen Hawking, Elon Musk and dozens of other leading scientists. Is it really that crazy? I’m honestly curious how the general public views this. On the one hand we have the old-fashioned view of the Luddites, which I’m more than a little sympathetic to. The machines we create will always be more efficient than the poor humans swinging an ax at the ore in the mines, so there is a price to pay for progress. The old-school thinking relied on the assumption that we would simply create new jobs for the people who built and maintained the machines. But what happens if the machines are too smart to need humans for anything beyond refilling the gas tank? Or if they eliminate the need for gas?

This is old-world thinking, I’m sure, but not every idea from the old world was crazy. More than a few doomsday theories have sunk into the general consciousness. A superbug. A breakdown of civil society. How about artificial intelligence taking on a role like the one in The Forbin Project?

Is it really all that crazy? I think the only way to answer with a firm “no” is to assume that we are incapable of doing the work of God and making a thinking machine. If that’s the case, then there’s nothing to worry about, because AI is essentially impossible. But if not, isn’t it almost inevitable? And if so… should we take the plunge? Your thoughts on the subject will be most welcome.
