Twitter has a problem with racism, but this time we’re not talking about their efforts to censor all of President Trump’s tweets for hidden white supremacy or Q-Anon hand signals. To invoke (and paraphrase) a favorite old movie from my youth, the racism is coming from inside the house!

This supposedly isn’t a case of a bunch of Twitter employees getting up to racist hijinks, however. Or at least not according to the powers that be at the social media giant. Their automated software appears to have a problem with Black people. How do we know? They use an Artificial Intelligence algorithm to create cropped preview images of pictures that users tweet out, but if the photo includes both White and Black people, the algorithm has a tendency to crop out the Black face and focus in on the White one for some reason. But they assure us that they’re hard at work on the problem and will come up with a resolution soon. (NY Post)

Twitter is examining why its artificial intelligence sometimes cuts black people’s faces out of photos.

The social-media giant uses technology called a neural network to create the cropped previews of photos that users see as they scroll through their feeds. But users discovered that the system often hones in on white faces when they’re pictured in the same image as black faces.

“We tested for bias before shipping the model and didn’t find evidence of racial or gender bias in our testing, but it’s clear that we’ve got more analysis to do,” Twitter spokeswoman Liz Kelley tweeted Sunday after users pointed out the issue, which was reported earlier by The Verge. “We’ll open source our work so others can review and replicate.”

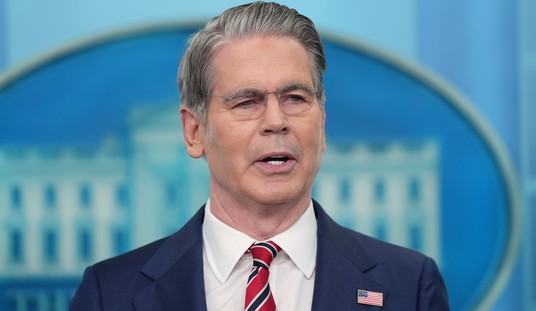

I’ll confess that this sounded a bit far-fetched to me at first glance, but there are other examples of similar phenomena in the tech world and Twitter’s own testing seems to bear out the claim. Here’s one of their software engineers (with the clever and not-at-all biased user name of “Tony Abolish (Pol)ICE Arcieri”) conducting a test. He took a picture that showed Barack Obama and Mitch McConnell in the same shot and ran it through to see how the algorithm would crop it. It picked McConnell every time, even when he switched the color of their ties.

Trying a horrible experiment…

Which will the Twitter algorithm pick: Mitch McConnell or Barack Obama? pic.twitter.com/bR1GRyCkia

— Tony “Abolish ICE” Arcieri 🦀 (@bascule) September 19, 2020

Here’s another test where someone tried the same experiment with two cartoon characters from The Simpsons, one White and one Black. Sure enough, they got the same results.

I wonder if Twitter does this to fictional characters too.

Lenny Carl pic.twitter.com/fmJMWkkYEf

— Jordan Simonovski (@_jsimonovski) September 20, 2020

As I mentioned above, there’s certainly precedent for this, but the way that the woke left will react to this news will almost certainly be different. We’ve known for a while now that facial recognition software, particularly Amazon’s products, is terrible at recognizing minorities and women. When trying to match the faces of White males, it can hit a better than 95% accuracy rate. But when looking at Black or Latino males or any woman, the error rates rise. When you get around to testing the system using pictures of Black women, the success rate falls into the 30s.

Is that because the people who designed the software are a bunch of racists? That’s what many opponents of facial recognition will tell you. But the reality is that the software is looking for clues in the shapes of facial features, the color of hair and eyes and a slew of other data points to build a unique profile of the face. And, at least according to one study, the unique features the software is taught to look for are far more variable in White people, including the shape of the nose, the range of eye and hair colors, and other features. The fewer unique features it’s able to tag in any given face, the higher the error rate.

I’m guessing (and it’s only a guess) that a similar issue is what’s plaguing Twitter’s image cropping algorithm. But facial recognition software has been getting more accurate lately, so maybe they’ll be able to clear this issue up as well.
